I read a very interesting post the other day, "The Fine Print," written by one of my favorite health care blogging colleagues, Margalit Gur-Arie, regarding the

"alleged contracting practices of EHR vendors and their notorious “hold harmless” clauses, which indemnify the EHR vendor from all liability due to software defects, including liability for personal injury and death of patients. What this means in plain English is that if a software “bug” or incompetency caused an adverse event, and if you (or your hospital) are faced with a malpractice suit, the EHR vendor cannot be named a co-defendant in that suit and you cannot turn around and bring suit against the vendor for failure to deliver a properly functioning product."

"The AMIA paper also asserts the existence of contractual terms preventing users and purchasers from publicly reporting, or even mentioning, software defects, including ones that may endanger patient safety..."

Wow. Assertions of blanket indemnity, coupled with a "gag order"? Is this something of which our REC provider clients should be made aware during EHR vendor selection and contract negotiation? (Beyond things such as practice "data ownership" and -- relatedly -- EHR "source code escrow"?) How substantive is the liability concern?

The grist for this post was the recent publication of an American Medical Informatics Association (AMIA) Board Position Paper entitled "Challenges in ethics, safety, best practices, and oversight regarding HIT vendors, their customers, and patients: a report of an AMIA special task force." (PDF)

"...Some vendors incorporate contract language whereby purchasers of HIT systems, such as hospitals and clinics, must indemnify vendors for malpractice or personal injury claims, even if those events are not caused or fostered by the purchasers. Some vendors require contract clauses that force HIT system purchasers to adopt vendor-defined policies that prevent the disclosure of errors, bugs, design flaws, and other HIT-software-related hazards..."

One commenter made this observation on Margalit's post:

"I'm still waiting to see an installation of any EMR be fully tested by either the vendor or the organization who purchased it."

Indeed.

All of which set me to reflecting on my first professional technical paper, written in 1988 during my tenure as an environmental radiation lab programmer and quality control analyst.

While those days were a time prior to indoor plumbing in IT terms, some of the points still resonate. As I began the paper:

"... [vendor] makes no representations or warranties with respect to the content hereof, and specifically disclaims any implied warranties of merchantability or fitness for any particular purpose for both this manual and the product it describes. Furthermore, [vendor] reserves the right to revise this publication and make changes in the content hereof without obligation to notify any person of any such revision or changes..."

"In less than a decade, microcomputers and software applications have become ubiquitous, indispensable tools in business, industry, and the sciences. The end-user faces a bewildering array of options with respect to makes, models, peripherals, and software compilers, libraries, firmware, and applications packages. Since the foregoing disclaimer may be found in the product documentation of virtually every commercial microcomputer hardware and software vendor, the end-user must blend the array of options, possible algorithmic deficiencies, and system incompatibilities into a comprehensive product. The user assumes -- usually unwittingly -- the cumulative responsibility for assuring a quality output..."

Twenty-three years later, the core liability concerns remain. And, still, the "user assumes -- usually unwittingly -- the cumulative responsibility for assuring a quality output."

The lab wherein I worked did a significant volume of "forensic-level" analysis, i.e., much of our output was destined for use as evidence in radiation contamination and dose-exposure liability litigation. Consequently, we turned over every rock, pebble, and grain of sand in search of conditions inimical to legally-defensible data "quality." Our Technical Director in particular, Dr. James Dillard (my mentor on this and other projects), had admonished us never to take computer-generated results at face value. He was fond of saying "you get what you INspect, not what you EXpect." As I note on my website preface citing and linking my paper:

You enter some numerical data into a computer and get some results back out. Do you simply assume they are "accurate" and report them to the client? Not in our lab. For example, even assuming your data and formula/function entries into a spreadsheet are correct, does it necessarily follow that the calculated results will be?

And so it came to be express policy (reflected in our "IT/ORL Software Quality Assurance SOP" I had a hand in writing) that we thoroughly test every software application -- in-house developed and commercial (off-the-shelf and 3rd-party custom-developed alike) -- and every computer wherein they would be installed for use in generating client-reportable results.
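The question in that preface (do correct inputs and correct formulas guarantee correct outputs?) has a classic numerical answer, and it is exactly the sort of thing an independent re-computation test is meant to catch. The Python sketch below is illustrative only; it is not the lab's SOP procedure, and the function names are hypothetical. The one-pass "textbook" variance formula, applied to readings that share a large common offset, loses its significant digits to cancellation, while a two-pass calculation on the same data recovers the exact answer.

```python
def naive_sample_variance(xs):
    """One-pass 'textbook' formula: (sum(x^2) - (sum x)^2 / n) / (n - 1)."""
    n = len(xs)
    total = 0.0
    total_sq = 0.0
    for x in xs:  # explicit loop: plain IEEE double accumulation
        total += x
        total_sq += x * x
    return (total_sq - total * total / n) / (n - 1)


def two_pass_sample_variance(xs):
    """Compute the mean first, then sum squared deviations from that mean."""
    n = len(xs)
    mean = 0.0
    for x in xs:
        mean += x
    mean /= n
    ss = 0.0
    for x in xs:
        ss += (x - mean) ** 2
    return ss / (n - 1)


# Four readings sharing a large offset; deviations from the mean are -6, -3, +3, +6,
# so the exact sample variance is (36 + 9 + 9 + 36) / 3 = 30.
readings = [1e9 + 4, 1e9 + 7, 1e9 + 13, 1e9 + 16]

print("naive:   ", naive_sample_variance(readings))     # negative: a "variance" below zero
print("two-pass:", two_pass_sample_variance(readings))  # 30.0
```

Both functions are "correct" on paper; only testing against a known answer exposes the difference.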

Complex as all of that was, those were the bucolic ancien days of relative computing simplicity. Today's hyper-connected, mobile world of 24/7 exponentially increasing digital apps and platforms -- from the client-server to the "Cloud" -- presents a potential host of new challenges.

Given the considerable complexities comprising Health Information Technology (very little of which goes to actual mathematical computing, it should be noted), these challenges are already within the crosshairs of the Medical Liability people. Recall, again, my prior citation of the Brouillard article.

Brouillard concludes:

"Although EHRs have now achieved mainstream, clinical adoption, EHR-related liability trends have not developed fully. At this early point, we can discern some potential liability areas. In an early EHR implementation stage, source of truth issues and expansion of liability issues may arise. In using EHR systems, the evolving standards of care for clinical documentation and work-arounds pose risks. Security as mandated by data breach laws or retention and storage issues involving e-discovery liability and data integrity have also emerged as important areas."

Consequently, it is rather unsurprising that counsel for EHR vendors would insist on boilerplate blanket "Hold Harmless" beg-offs. I rather doubt such stipulations would survive the first serious court test, given a case (assuming a jury trial) wherein a patient was harmed as a result of a documentable software flaw that prevented a provider from being made aware of an exigent patient circumstance (or induced a provider to take injurious action she would not otherwise have taken). Moreover, I am with AMIA on this point:

"f. “Hold harmless” clauses in contracts between Electronic Health Application vendors and purchasers or clinical users, if and when they absolve the vendors of responsibility for errors or defects in their software, are unethical." [pg 3]

Indeed.

To be fair, one principal reason for attempts at fine print "hold harmless" inoculation goes to the civil litigation reality of "Joint and Several Liability" -

Joint and several liability is a form of liability that is used in civil cases where two or more people are found liable for damages. The winning plaintiff in such a case may collect the entire judgment from any one of the parties, or from any and all of the parties in various amounts until the judgment is paid in full. In other words, if any of the defendants do not have enough money or assets to pay an equal share of the award, the other defendants must make up the difference.

e.g., if you are found to be perhaps only, say, one percent "liable" (exacerbated by the fact that civil liability is determined by the subjective "more-likely-than-not" "preponderance of the evidence" standard), but your relatively deep pockets find you holding 100% of the attachable assets with which to satisfy a judgment, well...

So, what is really at play here, perhaps, are the relatively deep pockets of EHR vendors vis-à-vis the materially shallower ones of individual physicians who are potential defendants.
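To make the "deep pockets" arithmetic concrete, here is a deliberately simplified Python model. It is not legal analysis (joint-and-several rules vary by jurisdiction), and the function name and all figures are hypothetical. Each defendant first pays its fault-proportional share, up to what can actually be collected from it; any uncollected balance then falls on whoever still has assets, deepest remaining pockets first.

```python
def collect_judgment(judgment, defendants):
    """Return how much each defendant ends up paying under a simplified
    joint-and-several model.

    defendants maps name -> (fault_share, collectible_assets); shares sum to 1.
    Step 1: each defendant pays its proportional share, capped by its assets.
    Step 2: any uncollected balance is taken from whoever still has assets,
            deepest remaining pockets first.
    Contribution claims between defendants, caps, and state-specific rules are ignored.
    """
    paid = {name: min(share * judgment, assets)
            for name, (share, assets) in defendants.items()}
    shortfall = judgment - sum(paid.values())

    def remaining(name):
        return defendants[name][1] - paid[name]

    for name in sorted(defendants, key=remaining, reverse=True):
        if shortfall <= 0:
            break
        extra = min(remaining(name), shortfall)
        paid[name] += extra
        shortfall -= extra
    return paid


# Hypothetical award and parties.
award = 1_000_000
parties = {
    "physician": (0.99, 50_000),   # 99% at fault, limited collectible assets
    "vendor": (0.01, 10_000_000),  # 1% at fault, deep pockets
}
result = collect_judgment(award, parties)
print(result)
```

In this made-up case the party found 1% at fault ends up covering 95% of the award, which is precisely the exposure boilerplate "hold harmless" language tries to shed.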

UPDATE:

TIMELY TOPICAL NOTES FROM THE NEW ENGLAND JOURNAL

"Medical errors and adverse events may result from individual mistakes in using EHRs (e.g., incorrectly entering information into the electronic record) or system-wide EHR failures or “bugs” that create problems in care processes (e.g., “crashes” that prevent access to crucial information)." [pg 2061]

"...as the use of EHRs grows, failure to adopt an EHR system may constitute a deviation from the standard of care. The standard of care is usually defined by reference to what is customary among physicians in the same specialty in similar settings. Once a critical mass of providers adopts EHRs, others may need to follow..." [pg 2065]

Sandeep S. Mangalmurti, M.D., J.D., Lindsey Murtagh, J.D., M.P.H., and Michelle M. Mello, J.D., Ph.D., "Medical Malpractice Liability in the Age of Electronic Health Records," N Engl J Med 363;21 (nejm.org), November 18, 2010.

So, given that the ever-wider deployment of HIT is seemingly inevitable (a prospect I obviously support), what are some of the truly salient liability risk concerns?

- EHR "Usability" issues (PDF) that might contribute to inadequately "idiot-trapped" data input errors (including mistakes and omissions);

- Code logic flaws that could lead to exigent "alerts" missed (or, conversely, irritating recurrent "false positive" alerts that precipitate user cynicism and apathy);

- Relatedly, "clinical decision support" logic flaws (or simple inadequacies);

- More general code flaws resulting in "crash" prone systems, leaving clinicians potentially in the lurch during time-sensitive points of care;

- OS and other incompatibilities (including adverse interactions with other resident apps);

- Math errors.

As I've previously noted, EHRs typically do very little outright math of any appreciable sophistication, so I put that last on the list (though that may change a bit over time, as apps go beyond, e.g., simple BMI arithmetic, lab value averages, and growth chart plotting). The typical EHR (at least of the ambulatory variety) is usually just a Java, C++, or .NET-coded GUI front-end app sitting atop and hooking into a relatively complex RDBMS (typically a multi-table SQL relational database these days), one whose principal purpose is to record and then re-display (either onscreen or in print) the myriad requisite subsets of administrative and clinical data as efficiently as possible (ideally).
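Even the "simple BMI arithmetic" end of EHR math is only as good as the input trapping around it. A minimal Python sketch follows; the function name and plausibility limits are hypothetical, not drawn from any vendor's product or clinical guideline.

```python
def bmi(weight_kg: float, height_m: float) -> float:
    """BMI = weight (kg) / height (m) squared, with crude range checks on input."""
    # Hypothetical plausibility limits; a real product would use clinically vetted ones.
    if not 0.3 <= height_m <= 2.5:
        raise ValueError(f"height {height_m} m is implausible (keyed in cm?)")
    if not 1.0 <= weight_kg <= 400.0:
        raise ValueError(f"weight {weight_kg} kg is outside the plausible range")
    return weight_kg / height_m ** 2


print(round(bmi(70.0, 1.75), 1))  # 22.9

try:
    bmi(70.0, 175.0)  # height entered in centimetres instead of metres
except ValueError as err:
    print("rejected:", err)
```

A range check like this catches the centimetres-for-metres slip, but not a weight entered in pounds (154 "kg" sails through), which is where interface design, not arithmetic, has to do the "idiot-trapping."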

Nonetheless, the systems are indeed extremely transactionally complex, and, absent thorough and consistent QA (including industry consensus stds? FDA oversight?), they could be vulnerable to a host of "gremlins," the upshot of which could range from the merely exasperating-to-the-workflow to the punitive "joint-and-several liability" class-action judgment in the wake of patient harms.

I have to note yet again that ONC-ATCB EHR "certification" for Meaningful Use has to do exclusively with an application's ability to reliably record and regurgitate the MU measures, in a HIPAA-compliant manner. Nothing more. Nothing pertaining to application "quality" more broadly (and in the more truly "meaningful" sense of efficient, "usable" functionality).

I will be watching the developing law here with interest. I would also exhort all vendors and users alike to think long and hard proactively about the considerable breadth of EHR "software quality" issues.

FYI:

A NEW ANTI "HOLD HARMLESS" WEBSITE

"Physicians and other healthcare providers are increasingly relying on EHR systems for the practice of medicine. As the number of EHR system providers increases and as these systems integrate with other systems to import and exchange data, it is important to track and understand issues of concern as they develop. This will, in-turn, allow for improvement in EHRs, in patient safety, and may result in liability reduction..."

Interesting: www.ehrevent.org

___

DEC 7th UPDATE

I was sifting through papers in my office today, and ran back across this law journal article I'd read a while back (PDF) and had given the thorough yellow highlighter treatment.

I should have cited this by now. Just slipped my attention. It antedates both the Brouillard and NEJM pieces. It's the first place wherein I ran across a call for FDA (or some federal entity) regulation of EHRs.

...the novel and significant risks generated by EHR systems cannot be ignored. Products with poor information display and navigation can impede rather than facilitate providers’ work. The growing capabilities of EHR systems require increasingly complex software, which heightens the danger of software failures that may harm patients...

...Thus far, the legal literature has not assessed the need for careful regulatory oversight of EHR systems akin to that required, in principle, by the Food and Drug Administration (“FDA”) for life-critical medical devices. This Article begins to fill that gap. It analyzes EHR systems from both legal and technical perspectives and examines how law can serve as a tool to promote HIT. Extensive regulations already exist to govern the privacy and security of electronic health information. Privacy and security, however, are only two of the concerns that merit regulatory attention. Perhaps even more important are the safety and efficacy of these life-critical systems. [pp. 106-107]

A good read. And, in light of the more recent news of EHR vendor "Hold Harmless" attempts, this observation near the end of the monograph is interesting:

...the threat of product liability or medical malpractice litigation could deter misconduct by both EHR system vendors and health care providers. Plaintiffs may sue providers if they suspect that they suffered poor outcomes because providers failed to implement or properly use EHR systems, for example, by neglecting to utilize decision-support features that may have averted a medical mistake. Likewise, plaintiffs might name EHR system vendors as defendants if they believe the harm is rooted at least partly in a design flaw, and health care providers might bring in vendors as third party defendants if they believe the vendors to be partially at fault. Audit logs and capture/replay would be helpful to all parties in investigating and proving their claims concerning system failures and provider negligence or lack thereof. [pg 161]

Do no "Hold Harmless."

___

FIRST, A SERENDIPITOUS PRODUCT DURABILITY REPORT

The Ingenix 2 gig USB flash drive is apparently machine-washable. Tumble dry, medium heat. LOL. I can't believe I did that. Found it in my cargo jeans pocket after the dryer stopped. Didn't lose a single file. Wow. (It was a freebie I got a while back at some HIT marketing event; has an Ingenix "CareTracker" demo on it. This is not to compromise my REC "vendor neutrality," BTW.)

___

REC "PPCP" RECRUITING TO DATE

No one at ONC really wants to talk about it for the public record these days (nor even much privately). One number that wafted my way recently was ~17,000 sign-ups nationally to date. "PPCP" means "Priority Primary Care Providers," who can get federally subsidized REC soup-to-nuts consulting/facilitation services during the first two years of the HITECH program. Eight months in, then, this means that REC "enrollment" (which is optional, recall) is running only about 50% of target-to-date in the aggregate, in light of the national goal to bring 100,000 primary care providers "meaningfully" on board within two years.
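The "about 50%" figure follows from straight-line pro-rating of the two-year goal. A quick Python check, assuming (as the paragraph implicitly does) that enrollment was expected to accrue evenly over the 24 months; that linear schedule is an illustrative simplification, not a published ONC target curve.

```python
# Figures from the paragraph: ~17,000 sign-ups eight months into a
# two-year push toward 100,000 primary care providers.
goal = 100_000
program_months = 24
months_elapsed = 8
signups = 17_000

# Assumption: a straight-line (linear) enrollment target.
target_to_date = goal * months_elapsed / program_months
pct_of_target = 100 * signups / target_to_date
print(f"pro-rata target: {target_to_date:,.0f}; achieved: {pct_of_target:.0f}%")
```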

REC provider recruitment barriers persist, obviously - to wit,

- EHR cost and concomitant ROI dubiety (given productivity loss and "everyone-benefits-except-me" concerns);

- Skepticism that the Meaningful Use reimbursement money will actually be forthcoming (particularly in the wake of the 2010 mid-term election outcomes -- with Republicans now loudly vowing to de-fund everything associated with "ObamaCare");

- Specific physician anxiety and anger regarding the yet-again pending draconian Medicare reimbursement cuts;

For example: "Unless Congress acts, physicians are just weeks away from taking a 23% cut in Medicare reimbursements mandated by the sustainable growth rate (SGR) formula. The cut is scheduled to go into effect on Dec. 1 and will be followed on Jan. 1 with an additional cut, bringing the total to 25%."

- Medicaid provider participation remains an acute concern. Some states (including my own) are considering dropping out of the Medicaid program, in light of rapidly increasing patient enrollment (owing to our record-high and seemingly intractable unemployment rate) concomitant with reductions in provider reimbursements during a difficult time of state budget deficits. Even should this not happen, state participation in the HITECH Meaningful Use program is voluntary, and not fully federally funded (states have to submit a "plan" to the feds, and come up with an unfunded 10% of "reasonable administrative expenses");

- More broadly, antipathy toward anything related to the motives of "the government," one being the allegation that this is all about the feds wanting to be able to easily gather all of our medical information in order to dictate [1] what treatments we can obtain (and how doctors must perform them) and [2] what we can eat (Michelle Obama's menacing BMI Violator Celery Stick Police);

- Vendor, VAR, and commercial consultant assertions that "you don't need REC help, we'll get you to MU" (owing to the fact that many of us have to charge subscription, hourly, or back-end fees, given that the HITECH subsidy is not 100% and is incrementally "milestone" based);

- General resistance-to-change inertia. As my mentor Dr. Brent James was fond of saying, "the only person who enjoys change is a baby with a wet diaper."

etc. It's all getting a lot of news coverage these days, pro and con.

"...Noting that the private sector has not been able to develop and adopt methods for significantly improving health outcomes in the U.S., [Paul Tang, MD] called recent federal legislation "transformative" by providing the goals, timetables, and financial incentives necessary to achieve desired change. "All the necessary forces are aligned," he said.

The Affordable Care Act (ACA), and the American Recovery and Reinvestment Act (ARRA) of 2009 share many of the same requirements for achieving and documenting cost and quality of care criteria, said Tang, and both will depend on the "meaningful use" of certified EHRs targeted by ARRA for widespread use by 2014. Meaningful use requirements address key health goals, including improved quality, safety and efficiency; engaging patients and their families; improving care coordination; improving population and public health; and ensuring privacy and security protections.

Tang was a keynote speaker at the MedeAnalytics Clinical Leadership Summit held Nov. 11-12 at the Fairmont Hotel in San Francisco."

Notwithstanding the truth of the foregoing assertions, I detect increasing wafts of circle-the-wagons angst, given that The Tea Party Cometh soon to the halls of Congress. While some feel that HITECH and the RECs will be safe from the 2011 legislative truncheon, I am not so sure.

HIT (and REC assistance) continues to be a tough sell, at least from my perspective in Nevada (but, in fairness, my state is the fiscal basket case of the nation; highest unemployment rate, highest mortgage foreclosure rate; 80% of non-foreclosed residential mortgages "under water," largest state budget deficit in the nation, proportionally, etc. A swell place to live these days).

I had a bit of irascible Photoshop commentary fun with this entire "recruitment" notion early on.

Whatever. They ostensibly re-hired me for the Meaningful Use Adoption Support "technical assistance"/QI effort, upon which I really want to focus my energy in lieu of all this "sales rep" stuff.

___

OUR CORE ADOPTION SUPPORT M.O.

The minimal essence I fought hard internally to establish. It was not universally loved within my REC team, having been deemed in some incumbent quarters too "old school / industrial." Right. Click the image to enlarge.

Rather simple, conceptually (props to my QA Guru wife for giving me her SOP template to use). My argument (which prevailed in the end) was that, should we manage nothing else beyond getting our client providers to Stage One Meaningful Use compliance, we would have met both our ONC and client-contractual obligations. Simply a matter of exigent priority, i.e.,

- Document and visualize the workflow components for each Meaningful Use criterion, as dictated by each EHR platform;

- Write it all up in a "Standard Operating Procedure" (SOP*) for client distribution;

- Include in the SOPs the sets of "screen shots" (where relevant) that can navigate any authorized users to the target MU "money fields" (where the data have to be consistently recorded).

[* We tend to soft-pedal the "SOP" thing to a degree, using the "softer" phrase "Standard Work," which is part of the "Lean Methodology" lexicon. "SOPs" conjure up MEGO-inducing images of dull ring-binder "Policies and Procedures Manuals" that typically sit on shelves unopened once written.]

As I have stated before, I would favor laying this sort of thing off on the EHR vendors (given that they know their products, specifically the MU documentation navigation paths within their respective systems, and that such paths are, implicitly anyway, part of ONC Certification), leaving RECs the time to concentrate on actual "process improvement" via which to make the entire effort a bottom-line winner for the providers.

In fact, were I setting ONC EHR Cert policy, I would have made such explicit instructions part of certification requirements. Perhaps once "permanent certification Registrar" requirements are set forth, such will be the case. I will certainly lobby for it. But, I won't be holding my breath.

EHR WORKFLOW, TASK TIME-TO-COMPLETION CONCERNS

You might recall my earlier blog post reflections regarding how Meaningful Use compliance could easily consume so much additional FTE labor cost as to negate the incentive reimbursements. "Use" case in point, from a study I found posted on our HITRC (again, click the image to enlarge).

I'm just too sorry: THIRTY-FIVE seconds to document a CPOE lab order (in the VistA EHR)? OK, annualize that, extrapolating from a couple of dozen patients per day. It's not viable. As I've pointed out elsewhere on this blog, just adding an aggregate couple of minutes of additional labor per chart to document all the Meaningful Use criteria will serve to nullify the reimbursement payments. Hence my personal imperative of trying to inculcate "Lean" principles within my client clinics as I work with them, in order to find ways to decrement the overall workflow FTE burden in support of effective HIT adoption.
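To put numbers on "annualize that," here is a back-of-the-envelope Python sketch. The 35-second figure, "a couple of dozen patients," and "a couple of minutes" come from the text; one lab order per patient, 250 clinic days per year, and a 2,080-hour FTE year are my assumptions, so treat the output as an order-of-magnitude illustration for a single provider's patient load.

```python
SECONDS_PER_ORDER = 35      # VistA CPOE lab order time cited above
PATIENTS_PER_DAY = 24       # "a couple of dozen"; assumes one lab order per patient
CLINIC_DAYS_PER_YEAR = 250  # assumption: ~5 days/week, 50 weeks
FTE_HOURS_PER_YEAR = 2080   # assumption: 40 h/week x 52 weeks


def annual_hours(seconds_per_chart, charts_per_day=PATIENTS_PER_DAY,
                 days=CLINIC_DAYS_PER_YEAR):
    """Annualize a per-chart documentation time into hours per year."""
    return seconds_per_chart * charts_per_day * days / 3600


cpoe = annual_hours(SECONDS_PER_ORDER)
mu_extra = annual_hours(2 * 60)  # "an additional couple of minutes" per chart

print(f"CPOE lab orders:  {cpoe:.0f} h/yr ({cpoe / FTE_HOURS_PER_YEAR:.1%} of an FTE)")
print(f"+2 min per chart: {mu_extra:.0f} h/yr ({mu_extra / FTE_HOURS_PER_YEAR:.1%} of an FTE)")
```

Multiplying those hours by a clinic's fully loaded hourly labor cost (not given here) yields the figure to set against the per-provider incentive payment.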

___

RE: "LEAN HEALTH CARE" AN UNSOLICITED SHOUT-OUT

Nice resource. Click the image to visit their site.

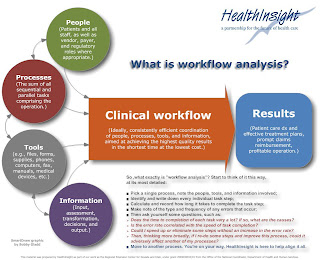

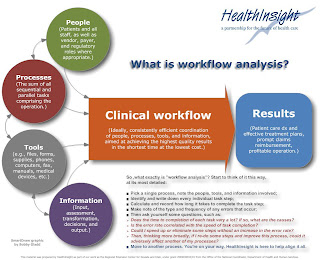

MY ONE-PAGE SUMMARY TAKE ON "WORKFLOW"

Click the image to enlarge.

SOME THOUGHTS ON "LEAN TRANSFORMATION"

Returning for a moment to the excellent book "On The Mend."

"Lean transformation is all about Dr. Deming’s Plan Do Study Act (PDSA), otherwise known as the scientific method. There is no simple formula to copy and no quick path to success. Instead you must perform your own experiments— tailored to the mission and circumstances of your organization. And then you must honestly study the results and act on your findings, including sharing them with the healthcare community."

Yeah, "PDSA." All the rage in progressive process QI circles for quite some time now (and, color me a believer, as I stated early on).

I would revise the acronym, however, to "SPDSA," i.e., "STUDY, Plan-Do-Study-Act." At the risk of belaboring a point that may be implicit in the minds of most people involved in this work (i.e., that an initial quantitative baseline assessment "study" is reflexively included in the "Plan" phase if it's truly "scientific"), I never lose sight of the source, the late Dr. Deming himself:

"Statistical control. A stable process, one with no indication of a special cause of variation, is said to be, following Shewhart, in statistical control, or stable. It is a random process. Its behavior in the near future is predictable. Of course, some unforeseen jolt may come along and knock the process out of statistical control. A system that is in statistical control has a definable identity and a definable capability (see the section "Capability of the process," infra).

In the state of statistical control, all special causes so far detected have been removed. The remaining variation must be left to chance -- that is, to common causes -- unless a new special cause turns up and is removed. This does not mean do nothing in the state of statistical control; it means do not take action on the remaining ups and downs, as to do so would create additional variation and more trouble (see the section on overadjustment, below). The next step is to improve the process, with never-ending effort (Point 5 of the 14 points). Improvement of the process can be pushed effectively, once statistical control is achieved and maintained (so stated by Joseph M. Juran many years ago)."

In Deming's view, attempting to "improve" processes that are empirically unstable constitutes what he called "tampering," interventions that typically only serve to make matters worse.
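Deming illustrated tampering with his well-known funnel experiment. The Python simulation below is a sketch of its "Rule 2" (after every result, move the aim to compensate for that result's deviation from target); the function name, seed, and sample size are arbitrary choices. For a process that is already stable, the compensating adjustments roughly double the variance, because each new result carries both its own random error and the negative of the previous one.

```python
import random
import statistics


def simulate_funnel(tamper, n=20_000, sigma=1.0, seed=1):
    """Return the variance of n results from a stable process centred on target 0.

    With tamper=True the operator applies funnel Rule 2: after each result,
    shift the aim by the negative of that result's deviation from target.
    """
    rng = random.Random(seed)
    aim = 0.0
    results = []
    for _ in range(n):
        result = aim + rng.gauss(0.0, sigma)  # common-cause noise only
        results.append(result)
        if tamper:
            aim -= result  # "correct" for the latest deviation (target is 0)
    return statistics.pvariance(results)


stable = simulate_funnel(tamper=False)
tampered = simulate_funnel(tamper=True)
print(f"variance ratio, tampered / left alone: {tampered / stable:.2f}")  # about 2
```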

A quick example from my own environmental lab "SPC" experience more than 20 years ago (before indoor plumbing, in IT terms). One of my projects (PDF) involved the development of a computerized system via which to track the accuracy, precision, and stability of the lab's numerous multi-channel radiation detector instruments.

QC personnel ran and recorded daily "sealed source" and "background" checks for every detector, along with QC specimens known as "matrix spikes," "D.I. water spikes," "matrix blanks," "matrix dupes," etc. (we were also subject to "blind" QC samples submitted by clients and regulators, posing as production samples). My software calculated the requisite statistics every day (e.g., mean response, std deviation/"coefficient of variation," upper and lower "warning" and "control limits," and linear regression trend slope), updating them with each new data point (through the first 20 entries, that is). Statistical "outliers" would then be readily apparent, and any out-of-calibration "drift" would show up in the fitted "slope." In the foregoing example, the detector looks to be "stable," evincing a random daily variability of about 1% of the average.

Below, same chart, annotated to call attention to a couple of early potential "mini-trends." Across the initial 20 or so data entries, it appeared that the detector might be trending sharply down, and, shortly thereafter, once the "dive" had abated, there was a downward "run" of six consecutive entries (the red oval).
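A "run" check like the one circled can be automated. This Python sketch flags six consecutive points, each lower than the one before (the form used in Nelson's run rules); reading the circled run that way is my assumption, since "run" rules also come in same-side-of-the-mean variants. The function name is hypothetical.

```python
def downward_run_flags(values, run_length=6):
    """Return the indices at which a run of `run_length` consecutive points,
    each strictly lower than the one before it, is complete."""
    hits = []
    streak = 1  # number of points in the current strictly decreasing stretch
    for i in range(1, len(values)):
        streak = streak + 1 if values[i] < values[i - 1] else 1
        if streak >= run_length:
            hits.append(i)
    return hits


daily = [10_040, 10_020, 10_010, 9_990, 9_980, 9_975, 10_030]
print(downward_run_flags(daily))  # [5]
```

A flag is a prompt to look, not a verdict; the call to take an instrument offline still rests with the analyst.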

Given that our lab hewed to "forensic standards," owing to the fact that much of our work was destined for use as evidence in contamination/dose exposure litigation, we tended to be hyper-vigilant when it came to QC. Nonetheless, textbook "applied process control statistics" can't do your thinking for you. Should detector LB5100/TA/002 have been taken offline for intervention after the first 20 accrued data points? Or, in the wake of a subsequent negative "run" of six daily results? Obviously, our lab's Technical Director didn't think so, and the overall plot tended to back his sanguinity.** No SPC "outliers" and about a 1% or less daily average variation across nearly three months; what's not to love? (LOL, such was not always the case.)

** In this case, you bring to bear contextual expert judgment. Like an aircraft pilot cross-referencing her instrument panel under IFR conditions for accurate and safe flight absent the ability to see outside the cockpit, our lab manager would take into account not only the daily sealed-source detector disintegration "counts," but also the correlative results of routine bench-chem-derived lab QC samples (the breadth of spikes and dupes inserted into the production stream). Moreover, expert judgment also informs that, given the stochastic nature of radionuclide decay emissions (all of them half-life "decay-corrected" in our system, btw), were you to re-run sealed sources multiple times per day (not practical), you would still see random intra-day variation.
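Two pieces of physics sit behind that footnote, shown here as a small Python sketch (the function names, the 30-day half-life, and the 10,000-count figure are hypothetical). Decay correction scales a measured count back to the source's reference date with C0 = C * exp(λt), where λ = ln 2 / T½. And because decay is a Poisson process, a count of N has a standard deviation of about √N, so the relative scatter from counting statistics alone is about 1/√N.

```python
import math


def decay_corrected(counts, elapsed_days, half_life_days):
    """Scale a measured count back to the reference date: C0 = C * exp(lambda * t)."""
    decay_constant = math.log(2) / half_life_days  # lambda = ln 2 / half-life
    return counts * math.exp(decay_constant * elapsed_days)


def counting_rsd(counts):
    """Relative standard deviation of a Poisson count: sqrt(N) / N = 1 / sqrt(N)."""
    return 1 / math.sqrt(counts)


# Hypothetical check source read exactly one half-life after its reference date.
print(decay_corrected(10_000, elapsed_days=30, half_life_days=30))  # about 20,000
print(f"{counting_rsd(10_000):.1%}")                                 # 1.0%
```

With roughly 10,000 counts per check, counting statistics alone would produce day-to-day scatter on the order of 1%, random variation that no amount of "adjustment" can remove.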

Statistical estimates are tools. They don't properly replace domain knowledge and experience. (Moreover, just because Excel now enables you to effortlessly fit an "nth-degree" polynomial to a scatter of a handful of data points -- replete with a nicely displayed equation and impressive R-Squared stat -- doesn't mean you should.)

Those were fun times. And, I have tried to internalize all of the "lessons learned," foremost among them that, should you fail to discover (or otherwise ignore) knowable "special causes" impacting a process and rectify them, your efforts at "improvement" may well be counterproductive.

But, as I now begin to actually work with my small outpatient primary care clinics doing "workflow analysis and re-design," I know I'm not going to get even close to such a level of rigorous statistical process analysis. Most of our workflow assessments are bound to be largely "qualitative" (and MU-compliance driven, at least across the Stage One period).

I guess we'll just take our "wins" where we can get them. Still, I remain drawn to true PDSA "lean" process improvement principles and procedures, e.g., as set forth by leading organizations such as the ThedaCare Center for Healthcare Value:

"The A3 method assures that the PDSA cycle is followed and the changes are monitored. The process steps can be documented in a variety of formats, but it typically includes the following elements, on a single piece of paper. A3 refers to the standardized paper size of 11” x 17”.

- Title – Names the problem, issue, or topic

- Owner/Date – Identifies who owns the problem or issue and the date of the latest revision

- Background – Why is this important? What background information is important? What have we seen in gemba?

- Current Conditions – Show the current state using pictures, graphs, data, etc. What is the problem?

- Goals/Targets – What results do you expect? What are the key measures? (quality, cost, morale, delivery, access, etc.)

- Analysis – What is the root cause(s) of the problem? If you work to eliminate this root cause, will you make progress toward solving the problem?

- Countermeasures – What proposed actions do you intend to take to reach the target condition? How will you show how your countermeasure will address the root causes of the problem? What is the new standard process?

- Implementation – What needs to be done? Who will do it? By when? What are the performance indicators to show progress? How will people be trained in the new process?

- Follow Up – What issues can be anticipated? How will you capture and share learning? How will you continuously improve or begin the cycle again (PDSA)?"

___

MORE ON CLINICAL "SCIENCE"

One place wherein I routinely hang out.

These people are hardcore. (I have to admit to some episodic "scientism" wariness when reading some of their content.) Among other topics, there's an ongoing debate at SBM regarding the epistemological and utilitarian differences (if any) between "EBM" (Evidence-Based Medicine) and "SBM" (Science-Based Medicine). As we ponder expanded "CER" (Comparative Effectiveness Research), which ever more widespread HIT adoption is envisioned to facilitate, I would expect to see some heated debate at SBM on the subject (CER is already much unloved in other curmudgeonly clinical circles).

___

BTW: ONC now has a blog.

Worth visiting and perusing.

QUICK UPDATE:

ANOTHER NICE RESOURCE I JUST FOUND AND JOINED

Very nice topical podcasts. Two thumbs up.

___

I read a very interesting post the other day, "The Fine Print," written by one of my favorite heath care blogging colleagues, Margalit Gur-Arie, regarding the

While those days were a time prior to indoor plumbing in IT terms, some of the points still resonate. As I began the paper:

Twenty-three years later, the core liability concerns remain. And, still, the "user assumes -- usually unwittingly -- the cumulative responsibility for assuring a quality output."

Nonetheless, the systems are indeed extremely transactionally complex, and, absent thorough and consistent QA (including industry consensus stds? FDA oversight?), they could be vulnerable to a host of "gremlins," the upshot of which could range from the merely exasperating-to-the-workflow to the punitive "joint-and-several liability" class-action judgment in the wake of patient harms.

I should have cited this by now. Just slipped my attention. It antedates both the Brouillard and NEJM pieces. It's the first place wherein I ran across a call for FDA (or some federal entity) regulation of EHRs.